Monitor Containerized Applications with Datadog and Docker Compose

Proper monitoring is a critical part of keeping production systems running smoothly. This article shows how to use the Datadog agent to monitor a Rust application, with the Datadog API key retrieved from the Zero secrets manager.

Sam Magura

Production systems must be closely monitored if we are to prevent downtime and discover bugs before end users report them. Logging to a table in your SQL database may once have been sufficient, but in a complex distributed system this approach scatters logs across small silos. In these cases, it is better to use a cloud monitoring service that aggregates logs and metrics from many sources. This article demonstrates how to integrate with one such service, Datadog.

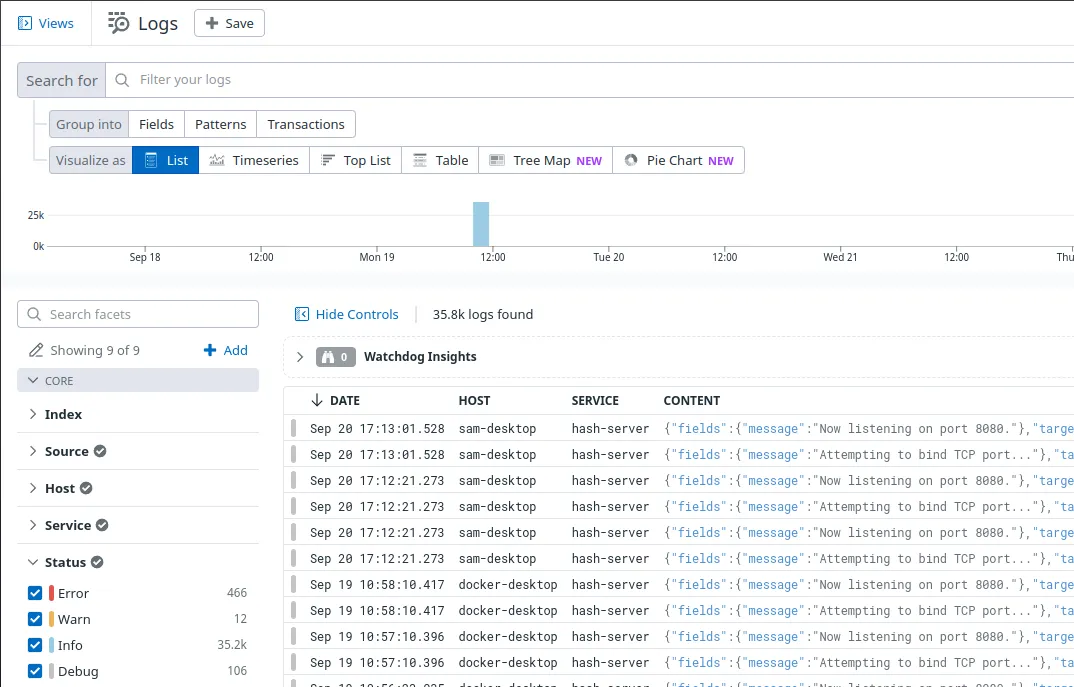

To integrate with Datadog, you'll need to collect logs and metrics from your application's processes and the servers they run on. To do this, you install and run the Datadog agent. Once the agent has captured data, such as application events and CPU and memory usage metrics, it syncs that data to the cloud, where you can view it in the Datadog portal. The portal provides powerful tools for searching logs and analyzing trends in your data.

Perhaps the most convenient way to run the Datadog agent locally is as a Docker container. We'll use Docker Compose to run the Datadog agent container alongside our application's container. The application will log to stdout when certain events occur, and the agent will read the logs and upload them to the cloud.

What makes this example unique is that we'll fetch the Datadog API key from the Zero secrets manager by building a custom Docker image on top of the official Datadog agent image.

🔗 The full code for this example is available in the zerosecrets/examples GitHub repository.

Writing an Application to Monitor

We'll write a simple TCP server in Rust so that we have something to monitor. When the TCP server receives data from a client, it should send the SHA-256 hash of the data back over the socket. Both the client and server will be written using Tokio, a popular library for writing reliable networked applications in Rust.
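The server in this article is written in Rust with Tokio. As a language-neutral illustration of the behavior described above, here is a minimal Python sketch using `asyncio`. It is not the article's implementation: the function names, the 1 KiB read size, the trailing newline, and the host and port are all assumptions made for the example.

```python
import asyncio
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


async def handle_client(
    reader: asyncio.StreamReader, writer: asyncio.StreamWriter
) -> None:
    """Read one chunk from the client and reply with its SHA-256 hash."""
    # Assumption: a single read of up to 1 KiB per connection.
    data = await reader.read(1024)
    if data:
        writer.write(sha256_hex(data).encode() + b"\n")
        await writer.drain()
    writer.close()
    await writer.wait_closed()


async def serve(host: str = "127.0.0.1", port: int = 8080) -> None:
    """Accept connections until cancelled. Host and port are placeholders."""
    server = await asyncio.start_server(handle_client, host, port)
    async with server:
        await server.serve_forever()
```

To try it, call `asyncio.run(serve())` and connect with any TCP client. The hashing itself lives in `sha256_hex`, so it can be checked without opening a socket.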

Run cargo new hash-server to initialize the project for the server. The server code will be very similar to the "Hello World" example shown in the Tokio README, except we will...

- Use the sha2 crate to compute the SHA-256 of the input.

- Use the tracing crate to log events to stdout. The JSON format is used so that Datadog can extract the timestamp, log level, and message from the output.
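To make the idea of structured logging concrete, here is a small Python sketch that writes one JSON object per line to stdout. It illustrates the general technique only and does not reproduce the output of the Rust tracing crate. The field names `timestamp`, `level`, and `message`, and the logger name, are assumptions chosen for the example.

```python
import json
import logging
import sys
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as a single-line JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            # Assumed field names; a log pipeline can map these to its own schema.
            "timestamp": datetime.fromtimestamp(
                record.created, tz=timezone.utc
            ).isoformat(),
            "level": record.levelname,
            "message": record.getMessage(),
        }
        return json.dumps(entry)


def build_logger(name: str = "hash-server") -> logging.Logger:
    """Create a logger that emits JSON lines on stdout."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # avoid duplicate output via the root logger
    if not logger.handlers:
        handler = logging.StreamHandler(sys.stdout)
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    return logger


logger = build_logger()
logger.info("server listening")
logger.warning("client sent an empty payload")
```

Because every line is a complete JSON document, a log collector can parse the fields directly instead of applying regular expressions to free-form text.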

See here for the full code.

Now start up the server with cargo run. It will print various logs to the console, such as

This is what we want to capture with Datadog!

To test the hashing functionality, we can send raw text to the server using curl and the telnet protocol:

You should get back a hash beginning with 4acf.
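If you would rather script this check than run it by hand, the following Python sketch does the same thing over a plain TCP socket. The function name and the default host, port, and timeout are assumptions for illustration. It also assumes the server replies and then closes the connection once the client has finished sending.

```python
import socket


def request_hash(
    text: str, host: str = "127.0.0.1", port: int = 8080, timeout: float = 5.0
) -> str:
    """Send `text` to the server and return whatever it sends back."""
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(text.encode())
        # Half-close the socket so the server knows no more data is coming.
        conn.shutdown(socket.SHUT_WR)
        chunks = []
        while chunk := conn.recv(4096):
            chunks.append(chunk)
    return b"".join(chunks).decode().strip()
```

Calling `request_hash("some text", port=...)` with the port your server listens on returns the hex digest as a string, which you can compare against a locally computed SHA-256.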

Secure your secrets conveniently

Zero is a modern secrets manager built with usability at its core. Reliable and secure, it saves time and effort.

Containerizing the Server

While running the hash-server binary directly is fine for local development, deploying the application as a Linux container is a better fit for production environments, since the container will work the same on any host system with a compatible container runtime.

Containerizing a Rust application is extremely straightforward — simply drop the following Dockerfile into the hash-server directory:

This Dockerfile employs a standard two-stage build so that we get the benefits of compiling inside the container without including the Rust build tooling in the final image.

The image can be built with

and run with

The --init flag runs a small init process as PID 1 inside the container. It forwards signals to the server, which allows us to stop it by pressing Ctrl+C.

Getting the Datadog API Key from Zero

Before proceeding, sign up for a free trial of Datadog. You'll be given an API key and prompted to run the Datadog agent as part of the sign-up flow. For now, just copy the API key. We'll run the agent to complete the sign-up process at the end of this section. To add the API key to Zero, create a new Zero token and click "Add secret". Then select Datadog from the dropdown.

The Datadog agent container expects to be passed a DD_API_KEY environment variable so it can report back to the Datadog cloud. To get the API key from Zero and pass it to the agent, we need to write a script that runs before the agent starts up and fetches the key from Zero. To keep things encapsulated, the script should run inside the container — this way, there aren't any extra steps required to run the container.

Be careful that your Docker image does not contain either the Datadog API key or the Zero token. Secrets can be extracted from images, so it's more secure to pass the secrets in when running the container.
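The general shape of such an entrypoint script can be sketched as follows. This is an illustration of the pattern, not the script used in this article. `fetch_api_key` is a hypothetical stand-in for the call that retrieves the secret from Zero (the real SDK call is not shown here), `ZERO_TOKEN` is an assumed environment variable name, and the agent command is whatever the base image normally runs.

```python
from __future__ import annotations

import os
from typing import Callable, Mapping, Sequence


def build_agent_env(
    base_env: Mapping[str, str],
    fetch_api_key: Callable[[str], str],
) -> dict[str, str]:
    """Return a copy of `base_env` with DD_API_KEY filled in at runtime."""
    # Assumption: the Zero token is passed in when the container starts,
    # e.g. `docker run -e ZERO_TOKEN=...`, and is never baked into the image.
    token = base_env.get("ZERO_TOKEN")
    if not token:
        raise RuntimeError("ZERO_TOKEN is not set")
    env = dict(base_env)
    # `fetch_api_key` is hypothetical; it stands in for the secrets-manager call.
    env["DD_API_KEY"] = fetch_api_key(token)
    return env


def run_agent(
    agent_cmd: Sequence[str], fetch_api_key: Callable[[str], str]
) -> None:
    """Replace this process with the agent, passing the enriched environment."""
    env = build_agent_env(os.environ, fetch_api_key)
    # execvpe does not return on success: the agent takes over this process.
    os.execvpe(agent_cmd[0], list(agent_cmd), env)
```

Using `os.execvpe` rather than spawning a child process means the agent takes over the script's process ID, so signals sent to the container reach the agent directly. The key exists only in the running process's environment and never in an image layer.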

My first attempt at this used a Bash script to call the Zero GraphQL API. While sending an HTTP request from Bash is relatively straightforward with curl, parsing the JSON response from the API is not.

My second attempt was to rewrite the script in Python, which is perfect for quickly writing scripts when you need the power of a "real" programming language. The Datadog image already has a recent version of Python, so all we need to do is install the Zero Python SDK.

Here's the finished script:

The script should be placed in a datadog-agent directory along with a Dockerfile that sets Python as the entrypoint:

Now you can build and run the agent container with:

If it worked, the Datadog website will show that the agent is reporting:

To stop the container, determine the container ID with docker ps and then kill it with docker kill <CONTAINER-ID>.

Connecting it All Together

The final step is to run hash-server and the Datadog agent at the same time, and instruct the agent to read the hash-server logs from stdout. This can be accomplished by adding a docker-compose.yml file to the project's root. Our compose file is based on the one from Datadog's docs:

The com.datadoghq.ad.logs label on the hash-server service instructs Datadog to monitor the hash-server container. The agent can also be configured to monitor all containers on the host system.
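The value of the com.datadoghq.ad.logs label is a JSON array of log configuration objects, written as a string. The Python sketch below builds one such value. The `source` and `service` values used here are assumptions for illustration and may differ from what the article's compose file uses.

```python
import json


def logs_label(source: str, service: str) -> str:
    """Build a JSON string suitable for the com.datadoghq.ad.logs label."""
    return json.dumps([{"source": source, "service": service}])


# Placeholder values; substitute the ones that match your service.
print(logs_label("rust", "hash-server"))
# prints: [{"source": "rust", "service": "hash-server"}]
```

In a compose file, the resulting string is typically wrapped in single quotes so that YAML treats it as one scalar rather than trying to parse the brackets.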

Start up Docker Compose with

If you change any of the source code, pass the --build option so that Docker Compose rebuilds the images. Now, run the client via cargo run and enter a string. You should receive its hash as before.

If you open the Datadog website in your browser and select "Logs > Search" from the menu on the left, you should see logs from hash-server!

This page has tons of filter & search features to help you locate specific logs. For example, uncheck all the boxes under "Status" except for "Warn" to only see warnings.

Wrapping Up

This article showed how to collect logs from a containerized Rust TCP server via Datadog, with the Datadog API key fetched using the Zero Python SDK.

The technique we used to pass the Datadog API key to the Datadog agent's entrypoint is quite general. The same pattern can be used any time you need to get a secret from Zero into a third-party container.

Thanks for reading and I hope to see you in the next one!
